A/B split testing is the fastest way to improve conversion rate when you treat it like a controlled experiment, not a guessing game. Instead of redesigning everything at once, you change one thing, measure the impact, and keep only what proves it can sell. That discipline matters even more in influencer marketing, where creative fatigue, audience mismatch, and tracking gaps can hide what is actually working. In this guide, you will learn a practical workflow to plan, launch, measure, and scale tests across landing pages, paid social, and creator content. Along the way, we will define the metrics and deal terms that affect performance so your results stay comparable. Finally, you will get templates, tables, and example calculations you can reuse on your next campaign.

A/B split testing basics: what it is and what it is not

At its core, A/B split testing compares two versions of an experience to see which one drives a better outcome. Version A is the control, and version B is the variant with one deliberate change. The outcome is usually a conversion event such as a purchase, sign-up, add to cart, or lead form submission. The key rule is isolation: if you change headline, hero image, and pricing all at once, you cannot tell what caused the lift. In practice, many teams run what looks like a test but is really a before-and-after comparison, which is vulnerable to seasonality, ad delivery shifts, and audience changes. Treat your test as a mini scientific study and you will make fewer changes, but you will make the right ones.

Before you test, define the measurement language you will use across ads, landing pages, and influencer posts. Here are the core terms, explained in plain English so you can apply them immediately:

- Impressions: total times an ad or post was shown. One person can generate multiple impressions.

- Reach: unique people who saw the ad or post at least once.

- Engagement rate: engagements divided by reach or impressions (choose one and keep it consistent). Example: 500 likes plus comments plus saves on 50,000 reach = 1% engagement rate.

- CPM (cost per mille): cost per 1,000 impressions. Formula: CPM = (Spend / Impressions) x 1000.

- CPV (cost per view): cost per video view (definition varies by platform, so document it). Formula: CPV = Spend / Views.

- CPA (cost per acquisition): cost per conversion. Formula: CPA = Spend / Conversions.

- Whitelisting: the brand runs paid ads through a creator handle (also called creator licensing). It can change performance because the “from” line is the creator, not the brand.

- Usage rights: permission to reuse creator content on your channels, in ads, or on your site, usually for a time window.

- Exclusivity: a restriction that prevents the creator from working with competitors for a period. It affects pricing and can affect results if the creator audience expects variety.

Concrete takeaway: write these definitions into your test plan so your team does not compare apples to oranges when reporting results.
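The formulas above translate directly into a few helper functions. This is a minimal Python sketch: the function names are illustrative and not part of any analytics tool, and the engagement rate helper assumes you pass whichever base (reach or impressions) your team has standardized on.

```python
def cpm(spend: float, impressions: int) -> float:
    """Cost per 1,000 impressions: (Spend / Impressions) x 1000."""
    return spend / impressions * 1000


def cpv(spend: float, views: int) -> float:
    """Cost per view. What counts as a view varies by platform."""
    return spend / views


def cpa(spend: float, conversions: int) -> float:
    """Cost per acquisition (cost per conversion)."""
    return spend / conversions


def engagement_rate(engagements: int, base: int) -> float:
    """Engagements divided by reach OR impressions; pick one base and keep it."""
    return engagements / base


# Engagement example from the definitions: 500 engagements on 50,000 reach.
print(f"{engagement_rate(500, 50_000):.1%}")  # 1.0%
```

Keeping these in one shared module is one way to make sure every report divides by the same thing.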

Pick one conversion goal and one primary metric

Good tests start with a single conversion goal. If you optimize for purchases but judge success on click-through rate, you will end up with “winning” creative that attracts curiosity, not buyers. Choose one primary metric that matches your goal and one secondary metric that helps you diagnose why. For ecommerce, the primary metric is often conversion rate or CPA, while secondary metrics might include add-to-cart rate, average order value, and bounce rate. For lead gen, the primary metric is cost per lead or lead conversion rate, with secondary metrics like form completion rate and time on page. Once you lock this in, you can run multiple tests without moving the goalposts.

Use a simple decision rule to keep the program moving. For example: “We ship the winner if it delivers at least a 5% relative lift in conversion rate with no more than a 10% increase in CPA.” That rule prevents endless debates when results are mixed. If you work with creators, also decide where the conversion is credited: last click, first click, or blended attribution. For a deeper measurement mindset, you can browse practical analytics and testing topics in the InfluencerDB.net blog and align your reporting format with how stakeholders already read performance.

Concrete takeaway: write your primary metric, secondary metric, and ship rule in one sentence at the top of every test brief.
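A ship rule like the one quoted above can be written down as a small function so nobody reinterprets it after the results come in. The sketch below is illustrative only: the function name and parameters are hypothetical, and it assumes both thresholds are relative changes against the control.

```python
def should_ship(
    cr_a: float,
    cr_b: float,
    cpa_a: float,
    cpa_b: float,
    min_cr_lift: float = 0.05,       # assumption: 5% relative lift in conversion rate
    max_cpa_increase: float = 0.10,  # assumption: CPA may rise by at most 10%
) -> bool:
    """Return True when variant B satisfies the ship rule against control A."""
    cr_lift = (cr_b - cr_a) / cr_a
    cpa_change = (cpa_b - cpa_a) / cpa_a
    return cr_lift >= min_cr_lift and cpa_change <= max_cpa_increase


# Conversion rate up from 3.0% to 3.5%, CPA down from $30.00 to $26.61.
print(should_ship(0.03, 0.035, 30.00, 26.61))  # True
```

Writing the thresholds as default arguments keeps them visible in one place, which makes it harder to move the goalposts mid-test.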

Build a hypothesis that tells you what to change

A hypothesis is not “let’s test a new landing page.” A useful hypothesis names the audience, the change, and the expected behavior shift. Here is a clean template: “For [audience], changing [element] from [A] to [B] will increase [metric] because [reason].” The “because” matters because it forces you to articulate a mechanism, which later helps you learn even if the test fails. In influencer campaigns, the mechanism might be trust, clarity, urgency, or relevance. For instance, creator audiences often respond to specificity, so a hypothesis could be that adding a clear price anchor and shipping timeline will reduce hesitation.

Keep your variable list tight. If you are testing creator whitelisting ads, you might change only the hook in the first two seconds while keeping the rest of the video identical. If you are testing a landing page, you might change only the headline and subhead while keeping the offer, imagery, and layout stable. When you need bigger changes, sequence them as a series of tests rather than one giant redesign. That way, you can bank incremental wins and avoid shipping a “winner” that only worked due to a temporary traffic mix.

Concrete takeaway: if you cannot point to the single element that differs between A and B, you do not have a clean test yet.
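One way to enforce the single-variable rule is to describe each variant as a set of named elements and compare them before launch. The following is a rough sketch; the element names and values are made up for illustration.

```python
_MISSING = object()  # sentinel so a missing element is not confused with None


def differing_elements(control: dict, variant: dict) -> list[str]:
    """Return the sorted names of elements whose values differ between A and B."""
    keys = control.keys() | variant.keys()
    return sorted(
        key for key in keys
        if control.get(key, _MISSING) != variant.get(key, _MISSING)
    )


def is_clean_test(control: dict, variant: dict) -> bool:
    """A clean test changes exactly one element."""
    return len(differing_elements(control, variant)) == 1


# Hypothetical landing page descriptions.
control = {"headline": "Feature First", "hero_image": "lifestyle.jpg", "offer": "10% off"}
variant = {**control, "headline": "Benefit First"}

print(differing_elements(control, variant))  # ['headline']
print(is_clean_test(control, variant))       # True
```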

Test design that avoids false winners

Most testing mistakes come from weak design, not weak ideas. Start by ensuring randomization and consistency: the same audience criteria, the same time window, and the same conversion event definition. Next, decide whether you are running a true split (50/50) or a weighted test (for example 80/20) to reduce risk. True splits are better for speed and statistical confidence, while weighted tests are safer when you are testing a risky offer or a new checkout. Also, avoid overlapping tests that touch the same part of the funnel, because they can interact and blur results.
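If you control assignment yourself (for example on a landing page rather than inside an ad platform), a common way to get a stable random split is to hash a visitor identifier. The sketch below is one possible approach, not a description of how any particular testing tool works; `visitor_id`, `experiment`, and `share_b` are hypothetical names.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str, share_b: float = 0.5) -> str:
    """Deterministically assign a visitor to 'A' or 'B'.

    share_b is the fraction of traffic sent to the variant:
    0.5 for a true split, 0.2 for an 80/20 weighted test.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode()).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "B" if bucket < share_b else "A"


# The same visitor always lands in the same variant for a given experiment.
print(assign_variant("visitor-123", "lp-headline") == assign_variant("visitor-123", "lp-headline"))  # True
```

Including the experiment name in the hash means a visitor's bucket in one test does not determine their bucket in the next one.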

Traffic quality is another silent killer. If variant B gets more high-intent traffic because the ad platform optimized delivery differently, your “lift” is not real. To reduce this, keep targeting identical, lock placements if possible, and monitor frequency and reach. On landing pages, run A and B simultaneously rather than sequentially. If you must run sequentially due to tooling limits, keep the window short and avoid major promo periods. For statistical confidence, use a sample size calculator and decide your minimum detectable effect in advance, but do not let math become an excuse to never ship. If you want a practical overview of how Google frames experimentation concepts, see Google’s A/B testing guidance.

Concrete takeaway: run tests simultaneously, keep targeting consistent, and set your minimum lift threshold before you look at results.
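To make the minimum detectable effect concrete, here is a rough sample size sketch based on a standard normal-approximation formula for comparing two proportions. Online calculators may use slightly different formulas, and the defaults (5% significance, 80% power) are common conventions assumed here rather than recommendations from this guide.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(
    baseline_rate: float,
    relative_mde: float,
    alpha: float = 0.05,  # assumption: two-sided 5% significance level
    power: float = 0.80,  # assumption: 80% power
) -> int:
    """Approximate visitors needed per variant to detect a relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Visitors per variant to detect a 3.0% -> 3.5% change in conversion rate.
print(sample_size_per_variant(0.03, 1 / 6))
```

Under these assumptions, a 3% baseline with a relative lift of about 17% calls for roughly 20,000 visitors per variant, and halving the detectable lift roughly quadruples that number.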

| Testing scenario | Best primary metric | Secondary diagnostic metric | Common confounder to watch |
|---|---|---|---|
| Landing page headline | Conversion rate | Bounce rate | Traffic source mix changes |
| Checkout flow change | Completed purchases | Cart abandonment rate | Payment method availability |
| Paid social creative hook | CPA | Thumb stop rate or 3 second view rate | Ad delivery optimization bias |
| Creator script angle | Revenue per 1,000 views | Link click rate | Audience fit and posting time |
| Offer test (discount vs bundle) | Gross profit per visitor | Average order value | Margin differences between offers |

Quick setup: a 60-minute A/B split testing workflow

You can launch a clean test in about an hour if you follow a repeatable checklist. First, write the hypothesis and ship rule in two lines. Second, confirm tracking: pixel events, UTMs, and a clear conversion definition in your analytics tool. Third, create the variant with one change only, and name it in a way that makes reporting obvious, such as “LP Headline – Benefit First” versus “LP Headline – Feature First.” Fourth, set your split and start time, and schedule a midpoint check to confirm data is flowing. Finally, document what you will do if the test breaks, such as reverting to control or pausing spend.

Here is a simple tracking stack that works for most influencer and paid social teams: UTMs for every link, platform pixel for conversion events, and a lightweight dashboard that shows spend, impressions, reach, clicks, conversions, revenue, and CPA. If you are using creator whitelisting, treat it like a separate channel because the delivery and trust dynamics differ. Also, capture deal terms that affect performance, including usage rights window, exclusivity, and whether the creator can edit the script. Those details help you explain why one variant scaled and another did not.

Concrete takeaway: if you cannot trace a conversion back to a variant using UTMs and event tracking, fix measurement before you test creative.
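Tagging every link consistently is easier with a small helper than by hand. The sketch below uses Python's standard `urllib.parse`; the URL and parameter values are placeholders, and putting the variant name in `utm_content` is one common convention assumed here, not a requirement.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utms(url: str, source: str, medium: str, campaign: str, content: str) -> str:
    """Return the URL with UTM parameters added, keeping existing query parameters."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query, keep_blank_values=True))
    query.update(
        {
            "utm_source": source,
            "utm_medium": medium,
            "utm_campaign": campaign,
            "utm_content": content,  # assumption: the variant name lives here
        }
    )
    return urlunsplit(parts._replace(query=urlencode(query)))


# Placeholder values for illustration only.
tagged = add_utms(
    "https://example.com/offer?ref=home",
    source="instagram",
    medium="creator",
    campaign="spring_launch",
    content="variant_b",
)
print(tagged)
```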

| Step | What to do | Owner | Output |
|---|---|---|---|
| 1 – Define goal | Pick one conversion event and one primary metric | Marketing lead | Test brief header |
| 2 – Hypothesis | Write audience, change, expected lift, and reason | Growth marketer | One sentence hypothesis |
| 3 – Tracking | Confirm pixel events, UTMs, and attribution window | Analyst | Tracking checklist complete |
| 4 – Build variants | Create B with one change, keep everything else identical | Designer or editor | Variant URL or ad ID |
| 5 – Launch | Split traffic, set budget, record start time | Media buyer | Live experiment |
| 6 – QA | Check events, links, and load speed within 2 hours | Analyst | QA notes |
| 7 – Decide | Apply ship rule, document learnings, plan next test | Team | Decision log entry |

How to read results: simple formulas and an example

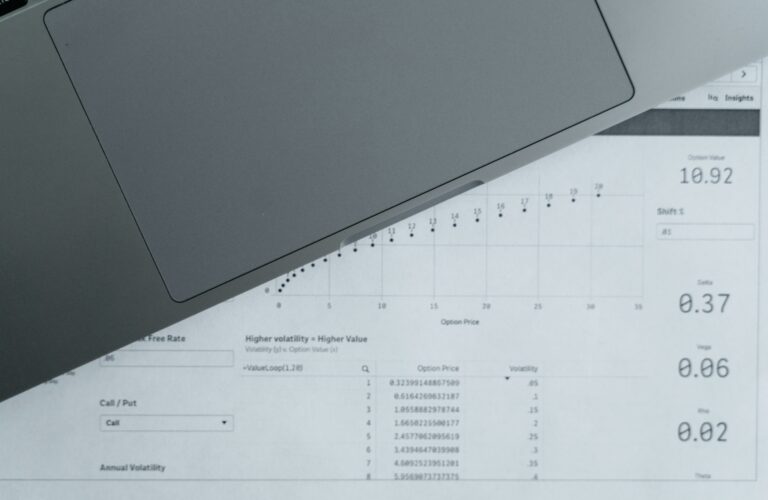

To keep decisions grounded, calculate a few basics the same way every time. Conversion rate = Conversions / Visitors. Lift = (B - A) / A, where A and B are the conversion rates of the control and the variant, expressed as a percentage. CPA = Spend / Conversions. Revenue per visitor = Revenue / Visitors. If you are comparing creator posts, you can also compute revenue per 1,000 impressions to normalize for reach differences: RPM = (Revenue / Impressions) x 1000. These formulas are simple, but they stop teams from overreacting to vanity metrics.

Example: Variant A gets 10,000 visitors and 300 purchases, so conversion rate is 3%. Variant B gets 10,200 visitors and 357 purchases, so conversion rate is 3.5%. The lift is (3.5% - 3%) / 3% = 16.7%. Now check efficiency: if A spent $9,000 for 300 purchases, CPA is $30. If B spent $9,500 for 357 purchases, CPA is $26.61. Even though B spent slightly more, the lower CPA and higher conversion rate make it a clear winner. When results are mixed, apply your ship rule and consider profit, not just conversion rate.
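The arithmetic in this example is easy to reproduce in code, which helps when you want every report to use identical definitions. This is a small sketch with illustrative function names; the inputs are the figures from the example.

```python
def conversion_rate(conversions: int, visitors: int) -> float:
    """Conversions divided by visitors."""
    return conversions / visitors


def lift(rate_a: float, rate_b: float) -> float:
    """Relative lift of B over A: (B - A) / A."""
    return (rate_b - rate_a) / rate_a


def revenue_per_visitor(revenue: float, visitors: int) -> float:
    """Revenue divided by visitors."""
    return revenue / visitors


def rpm(revenue: float, impressions: int) -> float:
    """Revenue per 1,000 impressions."""
    return revenue / impressions * 1000


cr_a = conversion_rate(300, 10_000)
cr_b = conversion_rate(357, 10_200)
print(f"A: {cr_a:.1%}  B: {cr_b:.1%}  lift: {lift(cr_a, cr_b):.1%}")
# A: 3.0%  B: 3.5%  lift: 16.7%
print(f"CPA A: ${9_000 / 300:.2f}  CPA B: ${9_500 / 357:.2f}")
# CPA A: $30.00  CPA B: $26.61
```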

One more practical check: look for segment effects. A variant might win on mobile but lose on desktop, or it might perform better for new visitors than returning customers. If you see a strong segment split, you can follow up with a targeted rollout rather than a blanket change. For platform level measurement definitions, Meta’s documentation on ads reporting can help you align view and attribution settings with what you are optimizing for: Meta Business Help Center.

Concrete takeaway: always report conversion rate, CPA, and lift, then add one segmentation cut that matches your biggest traffic source.
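A segmentation cut can be as simple as computing lift per segment from a small table of counts. In the sketch below, the device split is hypothetical data invented for illustration (it only sums to the totals in the earlier example), and the row layout is an assumption about how you export your data.

```python
from collections import defaultdict


def lift_by_segment(rows: list[tuple[str, str, int, int]]) -> dict[str, float]:
    """Compute relative lift of B over A for each segment.

    Each row is (segment, variant, visitors, conversions).
    """
    rates: dict[str, dict[str, float]] = defaultdict(dict)
    for segment, variant, visitors, conversions in rows:
        rates[segment][variant] = conversions / visitors
    return {
        segment: (by_variant["B"] - by_variant["A"]) / by_variant["A"]
        for segment, by_variant in rates.items()
    }


# Hypothetical device breakdown, not real campaign data.
rows = [
    ("mobile", "A", 6_000, 150),
    ("mobile", "B", 6_100, 214),
    ("desktop", "A", 4_000, 150),
    ("desktop", "B", 4_100, 143),
]

for segment, value in lift_by_segment(rows).items():
    print(f"{segment}: {value:+.1%}")
```

In this made-up data the variant wins on mobile and loses on desktop, which is the kind of split that would justify a targeted rollout. Segment-level samples are smaller than the overall test, so treat them as leads for a follow-up test rather than final answers.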

Influencer programs are full of testable levers, but you need to control what you can. Start with creator selection tests: run the same brief and offer across two creators with similar audience size, then compare CPA and revenue per 1,000 views. Next, test creative angles: keep the creator constant and change only the first line of the script, the product demo sequence, or the call to action. You can also test landing page continuity by matching the creator’s phrasing on the landing page headline, which often improves conversion because it reduces cognitive friction. When you do this, document whether the creator had full creative control or followed a strict script, since that affects repeatability.

Paid social adds more options. You can test whitelisting versus brand handle ads, or test different cuts of the same creator video. Keep usage rights and licensing clear so you can legally run the winning creative at scale. Also, watch frequency: a creative can look like a winner early, then collapse as the audience sees it too often. A practical rule is to monitor CPA by frequency bucket and refresh the hook when performance degrades. If you want more ideas on structuring experiments across channels, the InfluencerDB.net blog is a good place to build a testing backlog based on real campaign patterns.

Concrete takeaway: in influencer testing, control the offer and landing page first, then test creator and creative variables one at a time.
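Monitoring CPA by frequency bucket, as suggested above, can be sketched in a few lines. The bucket boundaries and the sample rows here are assumptions chosen for illustration; use whatever frequency breakdown your ad platform reports.

```python
from collections import defaultdict


def frequency_bucket(frequency: float) -> str:
    """Group average frequency into coarse buckets (boundaries are an assumption)."""
    if frequency < 2:
        return "under 2"
    if frequency < 4:
        return "2 to 4"
    return "4 and up"


def cpa_by_frequency(rows: list[tuple[float, float, int]]) -> dict[str, float]:
    """Aggregate (frequency, spend, conversions) rows into CPA per bucket."""
    spend: dict[str, float] = defaultdict(float)
    conversions: dict[str, int] = defaultdict(int)
    for frequency, row_spend, row_conversions in rows:
        bucket = frequency_bucket(frequency)
        spend[bucket] += row_spend
        conversions[bucket] += row_conversions
    # Skip buckets with no conversions to avoid dividing by zero.
    return {b: spend[b] / conversions[b] for b in spend if conversions[b] > 0}


# Hypothetical daily rows: (average frequency, spend, conversions).
rows = [(1.3, 500.0, 20), (1.8, 500.0, 18), (2.6, 500.0, 14), (3.4, 500.0, 12), (4.7, 500.0, 8)]

for bucket, value in cpa_by_frequency(rows).items():
    print(f"{bucket}: ${value:.2f}")
```

A CPA that climbs steadily from one bucket to the next is the signal to refresh the hook.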

Common mistakes that waste weeks of testing

The most common mistake is changing too many variables at once, then declaring victory without knowing why. Another frequent issue is stopping tests too early because the first day looks good, even though early results are noisy. Teams also misread significance by focusing on percent lift without checking sample size, which can produce false winners. In influencer campaigns, a classic error is comparing creators with different audience demographics or different posting formats, then attributing the difference to “content quality.” Finally, many programs fail to log learnings, so they repeat the same tests every quarter.

- Do not end a test based on one day of data unless you have an emergency stop rule.

- Do not compare variants that ran in different promo periods without adjusting expectations.

- Do not judge creator performance without normalizing for reach and impressions.

- Do not ignore deal terms like exclusivity and usage rights when scaling a winner.

Concrete takeaway: if you are not keeping a decision log, you are not building a testing program, you are just running isolated experiments.
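To see why percent lift alone can mislead, it helps to run a quick significance check alongside it. The sketch below uses a two-proportion z-test with a pooled standard error, which is one standard textbook approach and an assumption here, not necessarily what your testing tool uses.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Numbers from the earlier worked example.
print(f"{two_proportion_p_value(300, 10_000, 357, 10_200):.3f}")
# Roughly the same lift on a tenth of the traffic.
print(f"{two_proportion_p_value(30, 1_000, 36, 1_020):.3f}")
```

With the earlier example's numbers the p-value lands a little under 0.05, while a similar lift on a tenth of the traffic is nowhere near that threshold. The lift looks the same in both cases; only the sample size tells them apart.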

Best practices to increase conversion rate faster

Speed comes from focus and repetition. Maintain a backlog of test ideas ranked by expected impact and effort, and always keep one test running on your highest traffic funnel step. Use a naming convention for variants so results are searchable later. In addition, standardize your creative inputs: one brief template, one offer sheet, and one landing page structure, then test small improvements inside that system. When a test wins, roll it out quickly, but keep monitoring because performance can regress when you scale spend or broaden targeting. Most importantly, treat every test as a learning asset, not just a number.

- Prioritize high leverage pages: product page, checkout, and lead form pages usually beat low traffic blog pages for ROI.

- Test clarity before persuasion: shipping, pricing, returns, and what is included often lift conversion more than clever copy.

- Keep creative and landing page aligned: repeat the same promise in the first screen of the landing page.

- Scale winners with guardrails: increase budget in steps and watch CPA, not just volume.

Concrete takeaway: run fewer, cleaner tests and ship more winners by using a backlog, a brief template, and a consistent decision rule.
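A backlog ranked by expected impact and effort can live in a spreadsheet, but the ranking logic is simple enough to sketch. The scoring rule below (impact divided by effort on a 1 to 5 scale) is an assumption for illustration, as are the example ideas.

```python
from dataclasses import dataclass


@dataclass
class BacklogItem:
    name: str
    impact: int  # expected impact, 1 (low) to 5 (high); scale is an assumption
    effort: int  # effort to build and run, 1 (low) to 5 (high)

    @property
    def score(self) -> float:
        """Higher is better: more impact for less effort."""
        return self.impact / self.effort


def rank_backlog(items: list[BacklogItem]) -> list[BacklogItem]:
    """Return backlog items sorted from highest to lowest score."""
    return sorted(items, key=lambda item: item.score, reverse=True)


# Hypothetical ideas drawn from the kinds of tests described in this guide.
backlog = [
    BacklogItem("Checkout redesign", impact=5, effort=5),
    BacklogItem("Headline mirrors creator hook", impact=4, effort=1),
    BacklogItem("Delivery estimate above the fold", impact=3, effort=2),
]

for item in rank_backlog(backlog):
    print(f"{item.score:.1f}  {item.name}")
```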

A simple testing plan you can copy for the next 30 days

To make this real, here is a straightforward 30-day plan that fits most teams. Week 1: test a landing page headline that mirrors your top-performing ad or creator hook. Week 2: test one offer element, such as a free shipping threshold versus a small discount, and measure profit per visitor. Week 3: test a creator whitelisting ad against the same creative on the brand handle, keeping targeting identical. Week 4: test a checkout trust element, such as adding delivery estimates and returns clarity above the fold. Each week, log the hypothesis, results, and next action so the program compounds.

If you only remember one thing, remember this: A/B split testing works when you respect the basics: one change, one goal, clean tracking, and a decision rule. Do that consistently and conversion rate improvement becomes a process, not a lucky break.