A/B testing is the fastest way to turn “we think” into “we know” when you want to double your conversion rate without doubling spend. Instead of redesigning everything, you run controlled experiments that isolate one change at a time and measure the lift. That discipline matters even more in influencer and social traffic, where creative fatigue, audience mismatch, and platform delivery can hide what is actually working. In this guide, you will get a practical test menu, a setup framework, and decision rules you can use this week. Along the way, we will define the metrics and deal terms that affect conversion so your experiments reflect real business outcomes.

A/B testing basics – what to measure and what to control

Before you pick tests, lock down language and measurement so you do not “win” an experiment that cannot be repeated. Conversion rate (CVR) is the share of visitors who complete a goal: purchase, lead, install, or signup. Reach is the number of unique people who saw content, while impressions count total views including repeats. Engagement rate is typically engagements divided by impressions or reach, but you must define which one you use because it changes the story. CPM is cost per thousand impressions, CPV is cost per view (often video views), and CPA is cost per acquisition (a completed conversion). In influencer campaigns, whitelisting means running paid ads through a creator’s handle, usage rights define how you can reuse content, and exclusivity restricts the creator from working with competitors for a period.
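The definitions above can be written down as a few one-line functions. This is a minimal sketch, not a reporting tool: the function names and the sample numbers are illustrative assumptions, and `engagement_rate` takes the denominator explicitly so you have to choose between impressions and reach.

```python
def conversion_rate(conversions: int, visitors: int) -> float:
    """Share of visitors who completed the goal."""
    return conversions / visitors


def cpm(spend: float, impressions: int) -> float:
    """Cost per thousand impressions."""
    return spend * 1000 / impressions


def cpv(spend: float, views: int) -> float:
    """Cost per view."""
    return spend / views


def cpa(spend: float, conversions: int) -> float:
    """Cost per acquisition (completed conversion)."""
    return spend / conversions


def engagement_rate(engagements: int, base: int) -> float:
    """Engagements divided by a base you pick: impressions or reach."""
    return engagements / base


if __name__ == "__main__":
    # Hypothetical campaign numbers, for illustration only.
    spend, impressions, reach = 500.0, 40_000, 25_000
    engagements, conversions = 1_200, 20

    print(f"CPM: ${cpm(spend, impressions):.2f}")
    print(f"CPA: ${cpa(spend, conversions):.2f}")
    print(f"Engagement rate by impressions: {engagement_rate(engagements, impressions):.1%}")
    print(f"Engagement rate by reach: {engagement_rate(engagements, reach):.1%}")
```

With these made-up inputs the same post shows a 3.0% engagement rate on impressions and 4.8% on reach, which is why the denominator needs to be fixed before you compare creators.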

To make experiments trustworthy, control what you can. Keep the same offer, same landing page speed, and the same attribution window while you test a headline or a call to action. If you change both creative and pricing at once, you will not know what caused the lift. Also, decide your primary metric upfront: for ecommerce it is often purchase CVR or revenue per session; for lead gen it might be qualified lead rate, not just form submits. If you need a refresher on campaign planning and measurement concepts, the InfluencerDB Blog guides on influencer strategy and analytics are a good starting point.

Set up your experiment like a pro – hypothesis, sample size, and guardrails

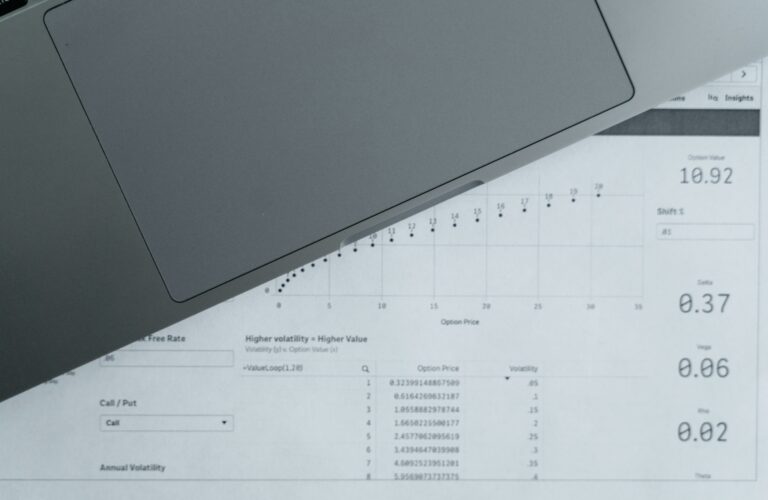

Good tests start with a hypothesis that names the audience, the change, and the expected outcome. Use this template: “For [audience], changing [element] from [A] to [B] will increase [metric] because [reason].” Next, define your minimum detectable effect (MDE), the smallest lift worth acting on. If your baseline CVR is 2.0% and you need at least a 15% relative lift to matter, your target is 2.3% CVR. That prevents you from celebrating tiny changes that will not move revenue.
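To see how the MDE turns into a traffic requirement, here is a rough sketch that uses the standard normal-approximation formula for comparing two proportions. The 5% significance level and 80% power are common defaults assumed here, not numbers from this guide, and the function names are illustrative.

```python
import math
from statistics import NormalDist


def target_cvr(baseline: float, relative_mde: float) -> float:
    """CVR the variant must reach for the lift to be worth acting on."""
    return baseline * (1 + relative_mde)


def sessions_per_variant(baseline: float, relative_mde: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate sessions needed in EACH variant (two-sided test).

    alpha and power are assumed defaults; adjust them to your risk tolerance.
    """
    p1 = baseline
    p2 = target_cvr(baseline, relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


if __name__ == "__main__":
    baseline, mde = 0.02, 0.15
    print(f"Target CVR: {target_cvr(baseline, mde):.2%}")
    print(f"Sessions per variant: {sessions_per_variant(baseline, mde):,}")
```

For the 2.0% baseline and 15% relative lift used above, this estimate lands in the tens of thousands of sessions per variant, so a low-traffic page may need a larger MDE or a longer test.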

Then add guardrails so you do not optimize yourself into a corner. Common guardrails include bounce rate, average order value (AOV), refund rate, and lead quality. For example, a more aggressive discount pop-up might raise CVR but lower AOV and increase returns. Finally, commit to a stopping rule: run the test for a full business cycle (often 7 days) and until each variant hits a minimum number of conversions. As a rule of thumb, aim for at least 100 conversions per variant for directional confidence, and more if the lift is small.
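The stopping rule and a guardrail check can be encoded so nobody has to argue about them mid-test. The sketch below assumes the 7-day and 100-conversion thresholds from the rule of thumb above; the 5% guardrail tolerance and the function names are hypothetical choices.

```python
def ready_to_stop(days_run: int, conversions_per_variant: dict[str, int],
                  min_days: int = 7, min_conversions: int = 100) -> bool:
    """True only when the test ran a full cycle AND every variant has enough conversions."""
    ran_full_cycle = days_run >= min_days
    enough_conversions = all(
        count >= min_conversions for count in conversions_per_variant.values()
    )
    return ran_full_cycle and enough_conversions


def guardrail_breached(control_value: float, variant_value: float,
                       max_relative_drop: float = 0.05) -> bool:
    """For higher-is-better guardrails such as AOV.

    max_relative_drop is an assumed tolerance, not a recommendation.
    """
    return variant_value < control_value * (1 - max_relative_drop)


if __name__ == "__main__":
    print(ready_to_stop(5, {"A": 140, "B": 160}))   # too few days
    print(ready_to_stop(8, {"A": 140, "B": 90}))    # variant B short on conversions
    print(ready_to_stop(8, {"A": 140, "B": 160}))   # both conditions met
    print(guardrail_breached(control_value=60.0, variant_value=54.0))  # AOV fell 10%
```

Guardrails where lower is better, such as refund rate, would need the comparison flipped.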

Simple math helps you estimate impact and prioritize. Use these formulas:

- Conversion rate (CVR) = conversions / sessions

- CPA = spend / conversions

- Incremental conversions = sessions x (CVR_B – CVR_A), where CVR_A is the control and CVR_B is the variant

- Incremental profit = incremental conversions x profit per conversion

Example: You get 50,000 sessions per month to a product page, and baseline CVR is 2.0% (1,000 orders). A test lifts CVR to 2.4% (1,200 orders). Incremental conversions are 50,000 x (0.024 – 0.020) = 200. If profit per order is $18, incremental profit is $3,600 per month. That is the kind of back-of-the-envelope calculation that keeps your roadmap honest.
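If you want to rerun that estimate for several test ideas, the formulas fit in a few lines. This is a small sketch with illustrative function names; the inputs are the numbers from the example.

```python
def incremental_conversions(sessions: int, cvr_a: float, cvr_b: float) -> float:
    """Extra conversions if all sessions converted at the variant's rate."""
    return sessions * (cvr_b - cvr_a)


def incremental_profit(sessions: int, cvr_a: float, cvr_b: float,
                       profit_per_conversion: float) -> float:
    """Extra profit implied by the CVR lift."""
    return incremental_conversions(sessions, cvr_a, cvr_b) * profit_per_conversion


if __name__ == "__main__":
    extra_orders = incremental_conversions(50_000, 0.020, 0.024)
    extra_profit = incremental_profit(50_000, 0.020, 0.024, 18.0)
    print(f"Incremental orders per month: {extra_orders:.0f}")
    print(f"Incremental profit per month: ${extra_profit:,.0f}")
```

The result assumes traffic volume and profit per order stay constant after the change, which is worth confirming with your guardrails.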

High-impact A/B testing ideas for landing pages and checkout

If your traffic is already coming in, landing pages and checkout are where conversion rate is won or lost. Start with tests that reduce friction or increase clarity, because they tend to generalize across channels. First, test your value proposition above the fold: a tighter headline, a clearer subhead, and one primary call to action. Next, test trust signals near the CTA, such as shipping and returns, warranty, secure checkout badges, and review counts. If you sell a high consideration product, test a comparison table or “as seen in” proof, but keep it specific and verifiable.

Checkout tests should focus on removing steps and surprises. Try guest checkout vs forced account creation, fewer form fields, and clearer error messages. Another reliable lever is payment options: adding Apple Pay, Google Pay, or buy now pay later can lift CVR, especially on mobile. For lead gen, test form length, but also test the question order so the easiest fields come first. When you run these tests, keep an eye on page speed because performance changes can masquerade as copy wins. Google’s documentation on measuring performance is a helpful reference for what “good” looks like: PageSpeed Insights documentation.

| Page area | Test idea | Why it works | Primary metric | Guardrail |
|---|---|---|---|---|
| Hero section | Headline focused on outcome vs feature | Improves message match and clarity | CVR | Bounce rate |
| CTA | “Buy now” vs “Get yours” vs “Start checkout” | Sets expectation and reduces hesitation | Click-to-checkout rate | AOV |
| Social proof | Star rating and review count near CTA | Builds trust at decision point | CVR | Return rate |
| Checkout | Guest checkout enabled | Removes forced commitment | Checkout completion rate | Repeat purchase rate |
| Payments | Add express pay buttons on mobile | Reduces typing and drop-off | Mobile CVR | Fraud rate |

Creator and influencer funnel tests – from hook to click to purchase

Influencer traffic behaves differently from search traffic. People arrive with context from the creator, and they often convert best when the landing page continues the same story. That is why your first influencer focused tests should align creative, offer, and landing experience. Test two hooks in the first two seconds of a short video: problem first vs result first. Then test the CTA style: “Use code MAYA10” vs “Tap to see my routine” vs “Take the quiz.” You can also test where the CTA appears: spoken early, on screen throughout, or only at the end.

Next, test offer framing rather than discount size. For example, “Free shipping today” can outperform “10% off” if your audience is price insensitive but hates surprise fees. If you are running whitelisted ads, test creator handle vs brand handle as the ad identity, because trust can shift dramatically. Also test landing pages built for creator traffic: a dedicated page with the creator’s video, their picks, and a short FAQ often beats a generic product page. Keep the test clean by changing one element at a time, such as the hero video or the offer block, not both.

| Funnel step | What to A/B test | How to implement | Success metric | Practical tip |
|---|---|---|---|---|
| Video hook | Problem statement vs transformation | Two edits of the same footage | 3-second view rate, CTR | Keep the first frame visually different |
| CTA language | Code-driven vs curiosity-driven CTA | Swap end card and caption line | Link clicks, CVR | Match CTA to landing page headline |
| Offer framing | Free shipping vs percent off | Same price, different message | CVR, AOV | Watch margin, not just CVR |
| Landing experience | Creator co-branded page vs standard PDP | Duplicate page with creator module | CVR, time on page | Use creator language, not brand slogans |
| Retargeting | UGC testimonial vs product demo | Two ad creatives to same audience | CPA | Exclude purchasers to avoid waste |

Pricing and deal term tests – CPM, CPA, usage rights, and exclusivity

Not every conversion lift comes from creative. Sometimes the biggest lever is how you structure the deal so you can iterate faster and scale winners. Start by understanding the pricing units. CPM is useful for awareness, CPV for video consumption, and CPA for performance outcomes. In influencer work, you will also negotiate usage rights (can you run the content as ads and for how long), whitelisting access, and exclusivity windows. Those terms change your effective cost because they determine how long you can extract value from the asset.

One practical A/B test is not on the audience, but on the contract structure: run two similar creators with different compensation mixes and compare blended CPA. For example, Creator A gets a flat fee of $2,000, while Creator B gets $1,000 plus a $10 bonus for each conversion beyond the first 50. If both creators drive 150 conversions, Creator A’s effective CPA is $2,000 / 150 = $13.33. Creator B earns the bonus on 100 conversions, so the effective CPA is ($1,000 + $1,000 bonus) / 150 = $13.33 as well. However, the bonus structure reduces your risk if performance is weak and often motivates better CTA placement. The key is to keep deliverables and timing comparable so you are not comparing apples to oranges.
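A short sketch makes the risk trade-off between the two deals visible across outcomes. It assumes, consistent with the arithmetic above, that the bonus applies only to conversions beyond the threshold; the function names and the 40 and 300 conversion scenarios are illustrative.

```python
def flat_fee_cost(fee: float) -> float:
    """Total cost of a flat-fee deal, independent of performance."""
    return fee


def hybrid_cost(base_fee: float, bonus_per_conversion: float,
                threshold: int, conversions: int) -> float:
    """Base fee plus a bonus on each conversion beyond the threshold (assumed structure)."""
    bonus_conversions = max(0, conversions - threshold)
    return base_fee + bonus_per_conversion * bonus_conversions


def effective_cpa(total_cost: float, conversions: int) -> float:
    """Total creator cost divided by conversions driven."""
    return total_cost / conversions


if __name__ == "__main__":
    for conversions in (40, 150, 300):
        cpa_flat = effective_cpa(flat_fee_cost(2_000), conversions)
        cpa_hybrid = effective_cpa(hybrid_cost(1_000, 10, 50, conversions), conversions)
        print(f"{conversions:>3} conversions: flat ${cpa_flat:.2f} vs hybrid ${cpa_hybrid:.2f}")
```

At 40 conversions the flat fee works out to $50 per conversion against $25 for the hybrid deal. At 300 conversions the flat fee is cheaper per conversion, which is the price you pay for the downside protection.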

Also test exclusivity only when it is truly needed. A 30-day category exclusivity clause can raise fees, so treat it like an experiment: pay for exclusivity with one creator, skip it with another, and monitor whether competitor posts actually hurt your conversion rate. When you do need exclusivity, write the clause narrowly by product category and platform to avoid paying for restrictions you do not use.

How to prioritize tests – an impact scoring framework you can reuse

When teams say “we will A/B test everything,” they usually test random things and learn slowly. Instead, score tests by expected impact, confidence, and effort. Impact is the size of the lever, confidence is how strong the evidence is, and effort is the time and engineering cost. A simple 1 to 5 score works. Then sort by (impact x confidence) / effort. This keeps your roadmap focused on changes that can plausibly move conversion rate, not just cosmetic tweaks.
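The scoring rule is easy to keep in a script or spreadsheet. Here is a minimal sketch; the `Idea` class, the backlog entries, and their 1 to 5 scores are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    impact: int      # 1 to 5: size of the lever
    confidence: int  # 1 to 5: strength of the evidence
    effort: int      # 1 to 5: time and engineering cost

    @property
    def score(self) -> float:
        return self.impact * self.confidence / self.effort


def prioritize(ideas: list[Idea]) -> list[Idea]:
    """Highest (impact x confidence) / effort first."""
    return sorted(ideas, key=lambda idea: idea.score, reverse=True)


if __name__ == "__main__":
    # Hypothetical backlog with hypothetical scores.
    backlog = [
        Idea("Guest checkout", impact=5, confidence=4, effort=3),
        Idea("Outcome-focused headline", impact=4, confidence=3, effort=1),
        Idea("Button color", impact=1, confidence=2, effort=1),
    ]
    for idea in prioritize(backlog):
        print(f"{idea.score:5.2f}  {idea.name}")
```

In this made-up backlog the cheap headline test outranks the bigger checkout change because effort sits in the denominator.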

Use this checklist to pick your next three tests:

- Start where intent is highest – product pages, checkout, pricing page, lead form.

- Fix obvious friction first – slow pages, broken mobile layouts, confusing shipping info.

- Prefer tests tied to user objections – “Will it fit?”, “Will it arrive on time?”, “Can I return it?”

- Choose one primary metric – CVR or qualified lead rate, then add 1 to 2 guardrails.

- Plan a follow up – every winner should spawn a new test, like CTA plus trust signal placement.

If you are running influencer campaigns, add one more rule: prioritize tests that improve message match between creator content and the landing page. That is where many brands leak conversions, especially when they repurpose UGC into ads without updating the page.

Common mistakes that kill conversion lifts

The most common failure is ending tests early because the graph looks exciting. Early spikes are often noise, especially with influencer bursts that come in waves. Another mistake is changing targeting or budgets mid-test, which changes who sees the variants and breaks the comparison. People also forget to QA tracking, so “conversions” are counted differently between variants. If you use UTMs, keep them consistent and verify that your analytics tool attributes both variants the same way.
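To see why an exciting early graph can be noise, compare the same kind of gap at two sample sizes with a pooled two-proportion z-test. This is a sketch using only the standard library; the traffic numbers are hypothetical, and the test is one common choice rather than the only valid one.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical early read: 2.0% vs 3.0% on 1,000 sessions each.
    z_early, p_early = two_proportion_z_test(20, 1_000, 30, 1_000)
    # Hypothetical later read: 2.0% vs 2.4% on 25,000 sessions each.
    z_late, p_late = two_proportion_z_test(500, 25_000, 600, 25_000)
    print(f"Early: z={z_early:.2f}, p={p_early:.3f}")
    print(f"Later: z={z_late:.2f}, p={p_late:.4f}")
```

The early read shows a bigger apparent lift but does not clear a conventional 0.05 threshold, while the smaller lift on the larger sample does. Checking the p-value repeatedly and stopping at the first good reading still inflates false positives, so pair a test like this with a stopping rule fixed in advance.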

In influencer programs, a frequent mistake is mixing creators, formats, and offers in one bucket and calling it an A/B test. If Creator A is on TikTok with a demo and Creator B is on Instagram Stories with a discount code, you are not testing one variable. Finally, teams sometimes optimize for clicks instead of purchases. High CTR can be a warning sign if the creative overpromises and the landing page underdelivers.

Best practices – how to turn wins into a repeatable system

Document every test in a simple log: hypothesis, screenshots, dates, traffic, results, and what you learned. That prevents you from retesting the same idea six months later. Next, standardize your creative testing process. For example, keep a “control” ad and rotate challengers against it, so you always know what “normal” looks like. In addition, segment results by device and by new vs returning users, because many wins are mobile only.
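Segmenting results does not need special tooling. The sketch below groups exported rows by device and variant; the row format, the field names, and the numbers are all assumptions made for illustration.

```python
from collections import defaultdict

# Hypothetical export: one row per variant and device.
EXAMPLE_ROWS = [
    {"variant": "A", "device": "mobile", "sessions": 6_000, "conversions": 108},
    {"variant": "B", "device": "mobile", "sessions": 6_000, "conversions": 150},
    {"variant": "A", "device": "desktop", "sessions": 4_000, "conversions": 100},
    {"variant": "B", "device": "desktop", "sessions": 4_000, "conversions": 98},
]


def cvr_by_segment(rows: list[dict], segment_key: str) -> dict[tuple[str, str], float]:
    """Return CVR keyed by (segment value, variant)."""
    totals = defaultdict(lambda: [0, 0])  # [conversions, sessions]
    for row in rows:
        key = (row[segment_key], row["variant"])
        totals[key][0] += row["conversions"]
        totals[key][1] += row["sessions"]
    return {key: conv / sess for key, (conv, sess) in totals.items()}


if __name__ == "__main__":
    rates = cvr_by_segment(EXAMPLE_ROWS, "device")
    for (device, variant), rate in sorted(rates.items()):
        print(f"{device:<8} {variant}: {rate:.2%}")
```

In this invented data set the variant wins on mobile and is roughly flat on desktop, the pattern the paragraph above warns about. Smaller segments are noisier, so treat segment-level differences as leads for follow-up tests rather than conclusions.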

When you work with creators, bake testing into the brief and the contract. Ask for two hooks, two CTAs, and one alternate thumbnail or cover frame. If you are paying for usage rights, specify that you can edit for length and add captions, because those are the levers you will test in whitelisted ads. For disclosure and ad labeling, follow the FTC’s guidance so your performance data is not built on risky practices: FTC Disclosures 101.

Finally, scale winners carefully. A result that lifts CVR at $50 per day might not hold at $5,000 per day if the platform expands to colder audiences. Increase budget in steps, watch CPA and frequency, and keep a challenger running so you do not get stuck with a fading creative. If you want more test ideas and measurement templates, browse the InfluencerDB Blog and adapt the frameworks to your channel mix.

A simple 14-day A/B testing plan to double conversion rate

You do not need a quarter-long roadmap to see gains. Over 14 days, you can run two sequential tests on your highest-intent page and one creative test for influencer traffic. Days 1 to 2: audit your funnel, confirm tracking, and pick one page with enough volume. Days 3 to 7: run a landing page test focused on clarity, like a new headline with a tighter value prop, treated as a single variant. Days 8 to 12: run a friction test, like guest checkout or express pay. Days 13 to 14: analyze, document, and turn the winner into the new control. If a test has not met your stopping rule (a full business cycle and your minimum conversions per variant) by the end of its window, extend it rather than calling it early.

At the same time, run one creator funnel test: two hooks or two CTAs on the same creator asset, ideally through whitelisting so delivery is comparable. Your takeaway is simple: if you keep the test clean, use guardrails, and prioritize high intent steps, doubling conversion rate becomes a series of manageable improvements rather than a single miracle redesign.